Quick Tour

Models

A range of ready-to-use pretrained models for different tasks is available on the Hub.

🤗Hugging Face Hub Page: https://huggingface.co/hezarai

Let’s walk through some examples!

Text Classification (sentiment analysis, categorization, etc.)

from hezar.models import Model

example = ["هزار، کتابخانهای کامل برای به کارگیری آسان هوش مصنوعی"]

model = Model.load("hezarai/bert-fa-sentiment-dksf")

outputs = model.predict(example)

print(outputs)

[[{'label': 'positive', 'score': 0.812910258769989}]]
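The result is a nested list with one inner list of candidates per input text. As a minimal sketch of how such a result might be consumed, the snippet below picks the highest-scoring label for each input and optionally drops low-confidence predictions. The helper name `top_labels` and the `min_score` parameter are illustrative assumptions, not part of Hezar.

```python
# Illustrative post-processing for a classification result shaped like the
# output above. `top_labels` is a hypothetical helper, not a Hezar API.


def top_labels(outputs, min_score=0.0):
    """Return the best (label, score) pair per input, or None below min_score."""
    results = []
    for candidates in outputs:  # one list of candidates per input text
        best = max(candidates, key=lambda c: c["score"])
        if best["score"] >= min_score:
            results.append((best["label"], best["score"]))
        else:
            results.append(None)
    return results


outputs = [[{"label": "positive", "score": 0.812910258769989}]]
print(top_labels(outputs))                 # best label for each input
print(top_labels(outputs, min_score=0.9))  # None where the score is too low
```

A threshold like `min_score` is one simple way to route uncertain predictions to a fallback; the right value depends on the application.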

Sequence Labeling (POS, NER, etc.)

from hezar.models import Model

pos_model = Model.load("hezarai/bert-fa-pos-lscp-500k") # Part-of-speech

ner_model = Model.load("hezarai/bert-fa-ner-arman") # Named entity recognition

inputs = ["شرکت هوش مصنوعی هزار"]

pos_outputs = pos_model.predict(inputs)

ner_outputs = ner_model.predict(inputs)

print(f"POS: {pos_outputs}")

print(f"NER: {ner_outputs}")

POS: [[{'token': 'شرکت', 'label': 'Ne'}, {'token': 'هوش', 'label': 'Ne'}, {'token': 'مصنوعی', 'label': 'AJe'}, {'token': 'هزار', 'label': 'NUM'}]]

NER: [[{'token': 'شرکت', 'label': 'B-org'}, {'token': 'هوش', 'label': 'I-org'}, {'token': 'مصنوعی', 'label': 'I-org'}, {'token': 'هزار', 'label': 'I-org'}]]
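The NER labels above appear to follow the BIO convention, where `B-` opens an entity and `I-` continues it. A common next step is to merge the tagged tokens into whole entities. The sketch below does that for a result shaped like `ner_outputs`. `group_entities` is a hypothetical helper rather than a Hezar function, and it assumes that tokens outside any entity carry a label without a `B-`/`I-` prefix (such as `O`), which the sample output does not show.

```python
# Hypothetical helper (not part of Hezar): merge B-/I- tagged tokens into spans.


def group_entities(tagged_tokens):
    """Merge consecutive B-/I- tokens of the same type into (text, type) pairs."""
    entities = []
    tokens, entity_type = [], None
    for item in tagged_tokens:
        prefix, _, tag_type = item["label"].partition("-")
        continues = prefix == "I" and tag_type == entity_type and bool(tokens)
        if continues:
            tokens.append(item["token"])
            continue
        # Any other label closes the span that was open, if there was one.
        if tokens:
            entities.append((" ".join(tokens), entity_type))
        if prefix in ("B", "I") and tag_type:
            # "B-x" starts a span; a stray "I-x" is treated as a start too.
            tokens, entity_type = [item["token"]], tag_type
        else:
            # Assumed "outside" label: no entity is open after this token.
            tokens, entity_type = [], None
    if tokens:
        entities.append((" ".join(tokens), entity_type))
    return entities


ner_outputs = [[
    {"token": "شرکت", "label": "B-org"},
    {"token": "هوش", "label": "I-org"},
    {"token": "مصنوعی", "label": "I-org"},
    {"token": "هزار", "label": "I-org"},
]]
for sentence in ner_outputs:
    print(group_entities(sentence))
```

For the sample sentence, the four tokens collapse into a single `org` entity.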

Mask Filling

from hezar.models import Model

model = Model.load("hezarai/roberta-fa-mask-filling")

inputs = ["سلام بچه ها حالتون <mask>"]

outputs = model.predict(inputs, top_k=1)

print(outputs)

[[{'token': 'چطوره', 'sequence': 'سلام بچه ها حالتون چطوره', 'token_id': 34505, 'score': 0.2230483442544937}]]

Speech Recognition

from hezar.models import Model

model = Model.load("hezarai/whisper-small-fa")

transcripts = model.predict("examples/assets/speech_example.mp3")

print(transcripts)

[{'text': 'و این تنها محدود به محیط کار نیست'}]

Text Detection

from hezar.models import Model

from hezar.utils import load_image, draw_boxes, show_image

model = Model.load("hezarai/CRAFT")

image = load_image("../assets/text_detection_example.png")

outputs = model.predict(image)

result_image = draw_boxes(image, outputs[0]["boxes"])

show_image(result_image, "result")

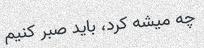

Image to Text (OCR)

from hezar.models import Model

# OCR with CRNN

model = Model.load("hezarai/crnn-base-fa-v2")

texts = model.predict("examples/assets/ocr_example.jpg")

print(f"CRNN Output: {texts}")

CRNN Output: [{'text': 'چه میشه کرد، باید صبر کنیم'}]

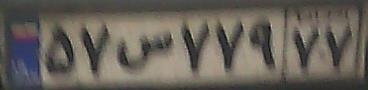

Image to Text (License Plate Recognition)

from hezar.models import Model

model = Model.load("hezarai/crnn-fa-license-plate-recognition")

plate_text = model.predict("assets/license_plate_ocr_example.jpg")

print(plate_text) # Persian text of mixed numbers and characters might not show correctly in the console

[{'text': '۵۷س۷۷۹۷۷'}]
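If the recognized plate needs to be logged or compared against records that use ASCII digits, one option is to normalize the digits after prediction. The sketch below is an assumption about a possible downstream step, not something Hezar provides. `to_ascii_digits` is a hypothetical helper that maps Persian and Arabic-Indic digits to `0`-`9` and leaves letters untouched.

```python
# Hypothetical helper (not part of Hezar): map Persian and Arabic-Indic digits
# to ASCII digits, leaving every other character unchanged.
PERSIAN_DIGITS = "".join(chr(0x06F0 + i) for i in range(10))       # U+06F0..U+06F9
ARABIC_INDIC_DIGITS = "".join(chr(0x0660 + i) for i in range(10))  # U+0660..U+0669
TO_ASCII = str.maketrans(PERSIAN_DIGITS + ARABIC_INDIC_DIGITS, "0123456789" * 2)


def to_ascii_digits(text):
    """Return `text` with Persian/Arabic-Indic digits replaced by ASCII digits."""
    return text.translate(TO_ASCII)


plate_text = [{"text": "۵۷س۷۷۹۷۷"}]
print([to_ascii_digits(item["text"]) for item in plate_text])
```

This only changes which digit characters are used; how a console orders mixed right-to-left and left-to-right text still depends on the terminal.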

Image to Text (Image Captioning)

from hezar.models import Model

model = Model.load("hezarai/vit-roberta-fa-image-captioning-flickr30k")

texts = model.predict("examples/assets/image_captioning_example.jpg")

print(texts)

[{'text': 'سگی با توپ تنیس در دهانش می دود.'}]

We keep training and adding new models, so this section should grow over time ;)

Word Embeddings

FastText

from hezar.embeddings import Embedding

fasttext = Embedding.load("hezarai/fasttext-fa-300")

most_similar = fasttext.most_similar("هزار")

print(most_similar)

[{'score': 0.7579, 'word': 'میلیون'},

{'score': 0.6943, 'word': '21هزار'},

{'score': 0.6861, 'word': 'میلیارد'},

{'score': 0.6825, 'word': '26هزار'},

{'score': 0.6803, 'word': '٣هزار'}]

Word2Vec (Skip-gram)

from hezar.embeddings import Embedding

word2vec = Embedding.load("hezarai/word2vec-skipgram-fa-wikipedia")

most_similar = word2vec.most_similar("هزار")

print(most_similar)

[{'score': 0.7885, 'word': 'چهارهزار'},

{'score': 0.7788, 'word': '۱۰هزار'},

{'score': 0.7727, 'word': 'دویست'},

{'score': 0.7679, 'word': 'میلیون'},

{'score': 0.7602, 'word': 'پانصد'}]

Word2Vec (CBOW)

from hezar.embeddings import Embedding

word2vec = Embedding.load("hezarai/word2vec-cbow-fa-wikipedia")

most_similar = word2vec.most_similar("هزار")

print(most_similar)

[{'score': 0.7407, 'word': 'دویست'},

{'score': 0.7400, 'word': 'میلیون'},

{'score': 0.7326, 'word': 'صد'},

{'score': 0.7276, 'word': 'پانصد'},

{'score': 0.7011, 'word': 'سیصد'}]
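Each `most_similar` call returns neighbours ranked by a similarity score. Assuming the score is cosine similarity, as is common for word embeddings (this page does not state which measure Hezar uses), the ranking can be sketched with a few toy vectors. The vectors, the words and the `most_similar` function below are made up for illustration and are unrelated to the real model weights.

```python
import math


def cosine(u, v):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v)


def most_similar(word, vectors, top_n=2):
    """Rank every other word by cosine similarity to `word` (toy version)."""
    query = vectors[word]
    scored = [
        {"score": round(cosine(query, vec), 4), "word": other}
        for other, vec in vectors.items()
        if other != word
    ]
    return sorted(scored, key=lambda item: item["score"], reverse=True)[:top_n]


# Hand-written 2-D vectors, chosen only so the arithmetic is easy to follow.
toy_vectors = {
    "thousand": [1.0, 0.0],
    "million": [0.8, 0.6],
    "hundred": [0.6, 0.8],
    "cat": [0.0, 1.0],
}
print(most_similar("thousand", toy_vectors))
```

Vectors pointing in nearly the same direction score close to 1 and orthogonal vectors score 0, which is why number words cluster together in the real outputs above.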

For a full guide on the embeddings module, see the embeddings tutorial.

Datasets

You can load any of the datasets on the Hub as shown below:

from hezar.data import Dataset

# The right `preprocessor` depends on how you plan to use the dataset later; the ones below are only examples.

sentiment_dataset = Dataset.load("hezarai/sentiment-dksf", preprocessor="hezarai/bert-base-fa") # A TextClassificationDataset instance

lscp_dataset = Dataset.load("hezarai/lscp-pos-500k", preprocessor="hezarai/bert-base-fa") # A SequenceLabelingDataset instance

xlsum_dataset = Dataset.load("hezarai/xlsum-fa", preprocessor="hezarai/t5-base-fa") # A TextSummarizationDataset instance

alpr_ocr_dataset = Dataset.load("hezarai/persian-license-plate-v1", preprocessor="hezarai/crnn-base-fa-v2") # An OCRDataset instance

flickr30k_dataset = Dataset.load("hezarai/flickr30k-fa", preprocessor="hezarai/vit-roberta-fa-base") # An ImageCaptioningDataset instance

commonvoice_dataset = Dataset.load("hezarai/common-voice-13-fa", preprocessor="hezarai/whisper-small-fa") # A SpeechRecognitionDataset instance

...

The dataset objects returned by load() are task-specific PyTorch Dataset wrappers and can be passed to a data loader out of the box.
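To make the data loader relationship concrete, here is a dependency-free sketch of the contract involved: a map-style dataset exposes `__len__` and `__getitem__`, and a loader groups samples into batches and hands each group to a collate function. `ToyDataset`, `pad_collate` and `iterate_batches` are hypothetical names written for this illustration. They are neither Hezar nor PyTorch APIs, and the sample fields are invented.

```python
# Dependency-free sketch of what a data loader does with a map-style dataset.


class ToyDataset:
    """Map-style dataset: exposes __len__ and __getitem__, like a PyTorch Dataset."""

    def __init__(self, samples):
        self.samples = samples

    def __len__(self):
        return len(self.samples)

    def __getitem__(self, index):
        return self.samples[index]


def pad_collate(batch, pad_id=0):
    """Pad every token_ids list in the batch to the length of the longest one."""
    max_len = max(len(sample["token_ids"]) for sample in batch)
    return {
        "token_ids": [
            sample["token_ids"] + [pad_id] * (max_len - len(sample["token_ids"]))
            for sample in batch
        ],
        "labels": [sample["label"] for sample in batch],
    }


def iterate_batches(dataset, batch_size, collate_fn):
    """Yield collated batches in order (no shuffling, no workers)."""
    for start in range(0, len(dataset), batch_size):
        stop = min(start + batch_size, len(dataset))
        yield collate_fn([dataset[i] for i in range(start, stop)])


dataset = ToyDataset([
    {"token_ids": [5, 6, 7], "label": 1},
    {"token_ids": [8], "label": 0},
    {"token_ids": [9, 10], "label": 1},
])
for batch in iterate_batches(dataset, batch_size=2, collate_fn=pad_collate):
    print(batch)
```

With a real Hezar dataset, the batching role is played by a PyTorch data loader and the collation role by the dataset's own `data_collator`, which the training example on this page passes to the trainer.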

You can also load Hezar’s datasets using 🤗Datasets:

from datasets import load_dataset

dataset = load_dataset("hezarai/sentiment-dksf")

For a full guide on Hezar’s datasets, see the datasets tutorial.

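Training

Hezar makes it easy to train models using the models and datasets that ship with the library. The example below trains a BERT sequence labeling model for part-of-speech tagging, initializing its weights from a base Persian BERT checkpoint.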

from hezar.models import BertSequenceLabeling, BertSequenceLabelingConfig

from hezar.data import Dataset

from hezar.trainer import Trainer, TrainerConfig

from hezar.preprocessors import Preprocessor

base_model_path = "hezarai/bert-base-fa"

dataset_path = "hezarai/lscp-pos-500k"

train_dataset = Dataset.load(dataset_path, split="train", tokenizer_path=base_model_path)

eval_dataset = Dataset.load(dataset_path, split="test", tokenizer_path=base_model_path)

model = BertSequenceLabeling(BertSequenceLabelingConfig(id2label=train_dataset.config.id2label))

preprocessor = Preprocessor.load(base_model_path)

train_config = TrainerConfig(
    output_dir="bert-fa-pos-lscp-500k",
    task="sequence_labeling",
    device="cuda",
    init_weights_from=base_model_path,
    batch_size=8,
    num_epochs=5,
    metrics=["seqeval"],
)

trainer = Trainer(
    config=train_config,
    model=model,
    train_dataset=train_dataset,
    eval_dataset=eval_dataset,
    data_collator=train_dataset.data_collator,
    preprocessor=preprocessor,
)

trainer.train()

trainer.push_to_hub("bert-fa-pos-lscp-500k") # Push the model, its config, the preprocessor, and the trainer files and configs to the Hub

Want to go deeper? Check out the training tutorials.